Setting up a client/server visualization pipeline You can now navigate to the case folder and load the case as described above. On the biggpu node you should see: Waiting for client. Launch pvserver in your case directory: # pvserver -server-port=11111įrom your local Linux/MacOS machine type the following command to set up the tunnel to the allotted biggpu node: # ssh -L :11111 example, if you have logged onto blueslogin4 to launch your interactive job and your allotted node number is bg2 then your command will be: # ssh -L 11111::11111 Paraview from your local machine and connect to the blueslogin node ( blueslogin4 in the above example) as explained in the first section of this page. SSH into the biggpu node allocated to your job (say bg2): # ssh Ĭhange to bash shell if you aren’t already using it: # bash You should see something like: JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON) Once your job starts, check the node number granted to your job: # squeue -u $USER If a node is available and your job starts, you should see something like: salloc: Granted job allocation Note: The biggpu partition may not have free nodes so you might not be able to start your interactive job immediately. Request an interactive job on 1 node of the biggpu partition (for 30 minutes as an example): # salloc -N 1 -p biggpu -t 00:30:00 -A

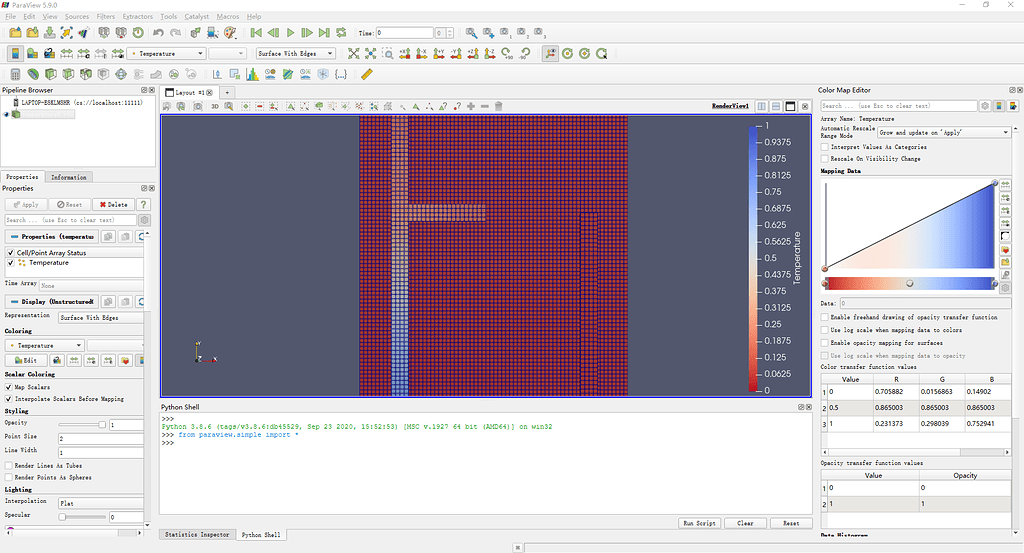

Note: If needed, please reference the first section of this page for any screenshots needed if you have trouble finding an option in the Paraview GUI.įirst, login to Blues for this example: # ssh note of the login node name and number that you end up on. The below is an example of how to do that for the biggpu partition. Users who need to visualize large problems will have to use a compute node with more resources, preferably the high memory node on the biggpu Slurm partition on Blues (this requires time allocation on Blues). Paraview Client-Server to an LCRC Compute Node (GPU Example) Your solution files should now be running in client-server mode. Open a case file clicking on the icon marked in the red circle below: Once connected, you should be able to open your solution files in your case directory on Bebop (since pvserver was launched from it). To verify your connection was successful (aside from no error messages locally), you should now see a connection message on the window running pvserver (note the last line below): Waiting for client. Click on the server name where you have started pvserver (in this example it would be beboplogin3) and hit Connect: You could add multiple servers using the above procedure. This will take you to the next tab where you can hit Save to save the configuration (Startup Type is Manual which is the default setting):Īfter adding a server, you should see a panel as shown below. Set the name as one of the login nodes, beboplogin2, beboplogin3, etc. You should use the port number given to you from when you started the pvserver instance if different than below. Once installed, launch Paraview locally and set up the connection to connect to our server instance.Ĭlick on the icon for Connect (marked in red circle on the figure below) and then click on the Add Server tab:įill in the details as shown below. 
Create an SSH tunnel to the login node you started pvserver on: # ssh -L 11111:localhost:11111 on your local machine, download and install the Linux/MacOS Paraview version 5.4.0 (or the matching version from the Paraview module you loaded in the first step) from the Paraview website. Now, open new terminal on your local machine/desktop (Mac/Linux). You should get output similar to the following: Waiting for client.Īccepting connection(s): beboplogin3:11111 Launch pvserver in your case directory: # pvserver You can verify that Paraview is loaded if you can produce this output: # which pvserver Now, load the Paraview module: # module load paraview/5.4.0 As mentioned for this example, it is beboplogin3, but may be different for you. In this first example, commands are shown for beboplogin3 and should be changed appropriately if you are using another login node.įirst, login to Bebop for this example: # ssh note of the login node name and number that you end up on. Paraview Client-Server to an LCRC Login Node Paraview itself should not be launched on any of the login nodes, but instead be run in client-server mode. Below are examples using Bebop and Blues (including the High Memory GPU nodes on Blues).

Here, we will outline how to use Paraview in client-server mode in LCRC from a local machine running Linux/MacOS.
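Both examples on this page rely on the same two mechanical steps: forwarding a local port to wherever pvserver is listening, and reading the allotted node name from the squeue listing. The following is only a rough sketch of those steps, not the commands LCRC prescribes. The function names build_tunnel_cmd and first_job_node and the host user@login.example.org are hypothetical placeholders, and the tunnel form assumes the standard OpenSSH syntax `-L local_port:host:host_port`. The script prints the ssh commands rather than running them.

```shell
#!/usr/bin/env bash
# Sketch only: helper names and the login host below are hypothetical.

# Print (do not run) an SSH command that forwards local PORT to
# TARGET:PORT as reached from LOGIN_HOST.
build_tunnel_cmd() {
    local port="$1" target="$2" login_host="$3"
    printf 'ssh -L %s:%s:%s %s\n' "$port" "$target" "$port" "$login_host"
}

# Read `squeue -u $USER` style output on stdin and print the last
# column (NODELIST(REASON)) of the first job row, skipping the header.
first_job_node() {
    awk 'NR == 2 { print $NF }'
}

# Login-node case: pvserver listens on the login node itself.
build_tunnel_cmd 11111 localhost user@login.example.org

# Compute-node case: pvserver listens on the allotted node (bg2 in the text).
build_tunnel_cmd 11111 bg2 user@login.example.org
```

On a cluster, the node name could be obtained with something like `squeue -u $USER | first_job_node` and passed as the second argument. For a job that is still pending, that column holds a reason in parentheses instead of a node name, so it is worth checking the value before building the tunnel.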
